Upgraded eWindScreen and improved AX Soundscape Processing

Introduction

At Signia, we aim for perfect hearing – and we get closer to achieving it every day. Signia regularly releases updates that improve hearing aid wearers’ hearing solutions. These updates allow wearers to continuously experience, enjoy and understand more, empowering them to be the most brilliant version of themselves.

The latest updates of our successful Augmented Xperience (AX) platform include:

Upgraded eWindScreen – bringing our existing wind noise reduction to the next level by optimizing the processing according to our Augmented Xperience philosophy.

Improved AX Soundscape Processing – getting even more value out of our existing, patented own voice detection, which is part of Own Voice Processing 2.0. The own voice detection now serves as an intelligent input to AX Soundscape Processing, providing an even more precise mapping of the speech and noise sources in a communication situation.

Upgraded eWindScreen™

When spending time outdoors, many hearing aid wearers still struggle with a problem they did not have before wearing hearing aids: fluctuating, annoying wind noise caused by turbulent airflow around the hearing aid microphones. Besides mechanical housing optimizations, most hearing aids already have some type of dedicated wind noise reduction algorithm. The challenge for such algorithms is to detect wind noise quickly and accurately and to reduce its annoyance.

In line with the philosophy of the AX platform, we want our hearing aid processing to move towards augmented processing: shaping all signals in the wearer’s surroundings so that the wearer can focus on the relevant ones. When a wearer is exposed to wind noise, the random fluctuations in the noise tend to dominate perception even if the noise has been reduced in level, which keeps it noticeable and annoying. According to Zwicker’s psychoacoustic annoyance model (Zwicker & Fastl, 2013), the more a noise fluctuates, the more annoying it is perceived to be.
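To make the role of fluctuation concrete, the sketch below evaluates the psychoacoustic annoyance formula as it is commonly quoted from Zwicker & Fastl: PA = N5 · (1 + √(wS² + wFR²)), where N5 is the percentile loudness in sone, S the sharpness in acum, F the fluctuation strength in vacil and R the roughness in asper. The function name is our own, and the constants are reproduced from the published model as we understand it; treat this as an illustration to be checked against the book, not as part of the Signia processing.

```python
import math

def psychoacoustic_annoyance(n5, s, f, r):
    """Psychoacoustic annoyance PA (illustrative, after Zwicker & Fastl).

    n5: percentile loudness N5 in sone (loudness exceeded 5% of the time)
    s:  sharpness in acum
    f:  fluctuation strength in vacil
    r:  roughness in asper
    """
    # Sharpness only adds annoyance above 1.75 acum
    w_s = (s - 1.75) * 0.25 * math.log10(n5 + 10) if s > 1.75 else 0.0
    # Combined fluctuation-strength / roughness term
    w_fr = 2.18 / n5 ** 0.4 * (0.4 * f + 0.6 * r)
    return n5 * (1 + math.sqrt(w_s ** 2 + w_fr ** 2))

# Same loudness, sharpness and roughness; only the fluctuation strength differs
steady = psychoacoustic_annoyance(n5=10.0, s=1.5, f=0.0, r=0.0)  # 10.0
gusty = psychoacoustic_annoyance(n5=10.0, s=1.5, f=1.0, r=0.0)
```

At equal loudness, the fluctuating noise scores higher than the steady one, so lowering F reduces annoyance even when N5 stays the same.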

To take on this challenge, the upgraded eWindScreen introduces a precise wind smoothing algorithm that reacts faster to the fluctuations in the wind noise. As a result, the upgraded eWindScreen not only limits the gain applied to wind noise but also reduces its fluctuations at each ear. The wind noise signal is smoothed in both ears, so the wind noise is not only softer but also no longer dominated by fluctuations. Perceptually, this reduction in fluctuation strength keeps the wind noise in the background, where it loses its capacity to draw the wearer’s attention and thus becomes less annoying. This allows the wearer to engage better in outdoor conversations and other outdoor activities.
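The actual eWindScreen algorithm is not described here, so the following is only a generic sketch of the two effects the paragraph names: a cap on the gain applied to wind noise, and a smoothing step that pulls envelope peaks down toward a running average. The function `smooth_wind_gain` and its parameters `max_gain` and `alpha` are hypothetical and operate on a per-frame wind-noise envelope.

```python
import numpy as np

def smooth_wind_gain(envelope, max_gain=0.5, alpha=0.3):
    """Hypothetical per-frame gains for a wind-noise envelope.

    max_gain caps the gain applied to wind noise (level reduction).
    alpha sets how fast the running average follows the envelope
    (larger alpha reacts faster). Frames that rise above the running
    average are pulled down onto it, which flattens the fluctuations.
    """
    env = np.asarray(envelope, dtype=float)
    gains = np.empty_like(env)
    avg = env[0]
    for i, e in enumerate(env):
        avg = alpha * e + (1.0 - alpha) * avg      # one-pole smoother
        peak_gain = min(1.0, avg / max(e, 1e-12))  # below 1 only on peaks
        gains[i] = max_gain * peak_gain
    return gains

gusty = np.tile([1.0, 4.0], 50)       # alternating calm and gust frames
out = gusty * smooth_wind_gain(gusty)

def relative_fluctuation(x):
    # Coefficient of variation: spread of the envelope relative to its mean
    return np.std(x) / np.mean(x)
```

The split between the two terms matters: a fixed gain cap alone scales every frame equally and leaves the relative fluctuation unchanged, which mirrors the point that wind noise reduced in level can still be dominated by its fluctuations. Only the peak-dependent term lowers `relative_fluctuation(out)` below `relative_fluctuation(gusty)`.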

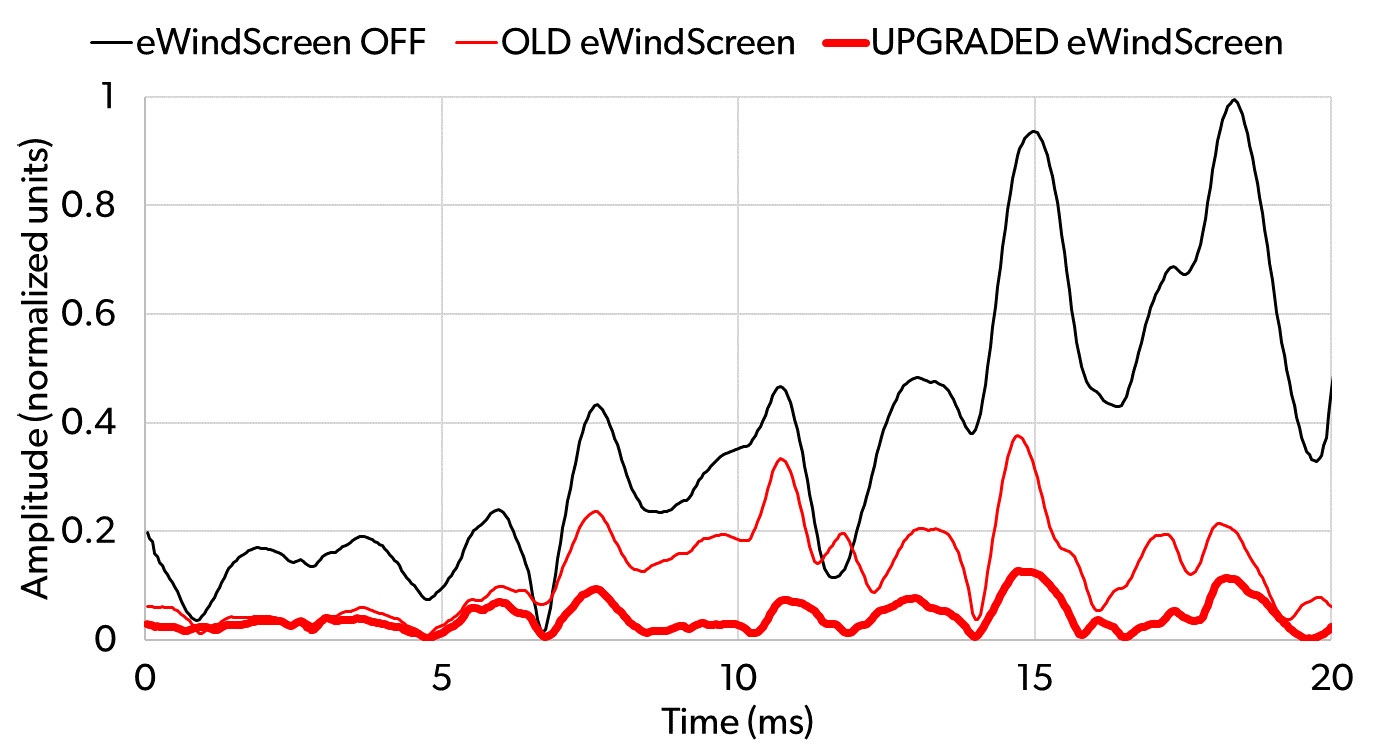

Figure 1 shows 20 ms of a wind noise signal at the output of the hearing aid in three processing conditions: 1) eWindScreen off, 2) old version of eWindScreen on, and 3) upgraded eWindScreen on. In the first 7 ms of the signal, the wind noise level is low, and there the old and the upgraded eWindScreen provide the same attenuation. In the remaining part of the signal, where the wind noise becomes louder, note how the upgraded eWindScreen not only reduces the amplitude of the wind noise but also substantially reduces the annoying fluctuations.

Figure 1. Comparison of a wind noise signal at the output of the hearing aid in three different processing conditions: eWindScreen off, old eWindScreen, and upgraded eWindScreen.
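One simple way to put a number on the "fluctuations" visible in a plot like Figure 1 is to take the frame-wise RMS envelope of the output signal and compute its coefficient of variation. The metric, the function name `fluctuation_index`, the 16 kHz sample rate and the 1 ms frames are all assumptions for illustration; the test signals are synthetic, not the recordings behind Figure 1.

```python
import numpy as np

def fluctuation_index(signal, frame_len):
    """Coefficient of variation of the frame-wise RMS envelope.

    Hypothetical metric: 0 for a perfectly steady envelope,
    larger values for stronger level fluctuations.
    """
    x = np.asarray(signal, dtype=float)
    n_frames = len(x) // frame_len
    frames = x[: n_frames * frame_len].reshape(n_frames, frame_len)
    rms = np.sqrt(np.mean(frames ** 2, axis=1))
    return float(np.std(rms) / np.mean(rms))

fs = 16000                        # assumed sample rate
t = np.arange(int(0.020 * fs)) / fs   # 20 ms, as in Figure 1
carrier = np.sin(2 * np.pi * 1000 * t)
steady = carrier                                          # constant level
fluctuating = (1 + 0.8 * np.sin(2 * np.pi * 100 * t)) * carrier

frame = int(0.001 * fs)           # 1 ms frames
```

A smoothing stage that removes fluctuations should lower this index even when the average level is unchanged, whereas a pure level reduction leaves it untouched because the index is scale-invariant.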

Improved AX Soundscape Processing

The most important need hearing aid wearers have, and one we continuously focus on addressing, is the ability to engage in conversations with other people in noisy environments. Like everyone else, hearing aid wearers want to actively contribute to a conversation, not only listen passively. Until now, hearing aids have primarily used information about the environment to identify sound sources, attenuate the noise and focus on the people speaking. In situations where both the wearer and others are speaking in a noisy environment, the automatic steering in the hearing aid may be disturbed because the wearer’s own voice is louder at the hearing aid microphones than other voices. As a result, the hearing aid may provide suboptimal support by failing to maintain consistently optimal microphone directionality. Besides causing uncomfortable or distracting sound fluctuations, this may in some cases also reduce speech understanding.

Signia’s strong focus on mastering the full acoustic scene, taking all sound sources into consideration, inspired the inclusion of own voice detection in the analysis of the entire sound scene. Through extended use of the own voice detection function of Signia’s unique Own Voice Processing 2.0 (Signia, 2022), the application and focus of all the speech enhancement and noise attenuation algorithms can be adapted more precisely to the given communication situation.

Own Voice Processing 2.0 separates own voice from background noise and optimizes the processing of own voice without disturbing the processing of the background. In the improved AX Soundscape Processing, however, Own Voice Processing 2.0 does more than improve the wearer’s perception of their own voice: it now also serves as an additional sensor that contributes to the analysis of the entire sound scene. Knowing whether the wearer or someone else is talking allows the advanced AX noise reduction and directionality systems to provide the right amount of support when the wearer actively engages in conversations in demanding sound environments. Thus, own voice detection helps optimize the processing of not only the wearer’s own voice but the entire sound environment, including the voices the wearer wants to attend to. As a result, the wearer experiences stable, optimal support that makes it easier to engage in conversations in challenging sound environments.

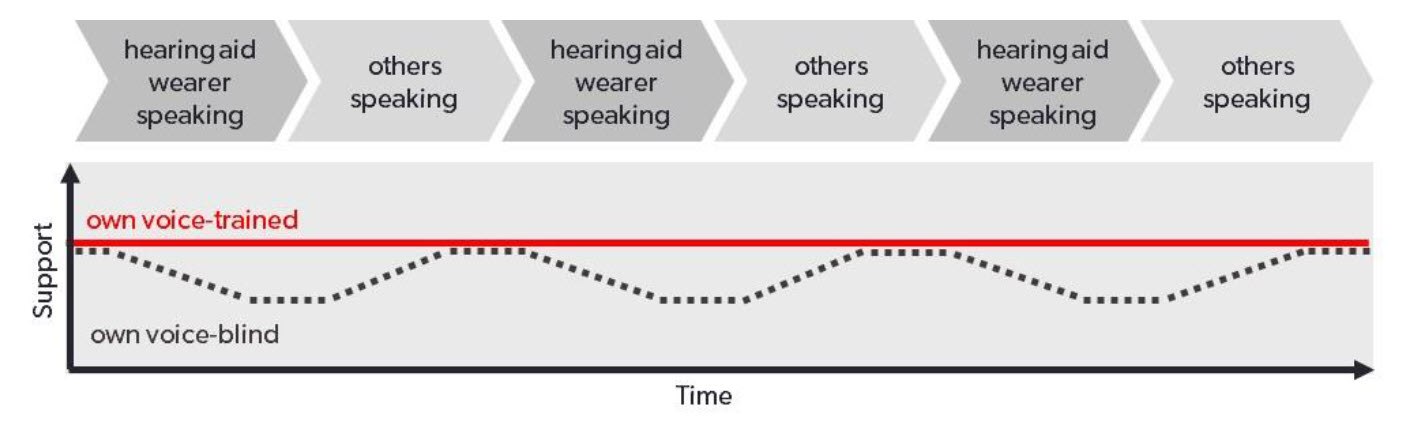

Figure 2 illustrates the difference between the support provided by an “own voice-blind” hearing aid and that provided by an “own voice-trained” hearing aid. The “support” axis indicates the activation of supporting algorithms such as noise reduction and directionality. The “own voice-trained” hearing aid provides a stable, appropriate amount of support both when the wearer is speaking and when the wearer is listening to others. The “own voice-blind” hearing aid, by contrast, fluctuates between different states of support because the sound of the wearer’s own voice disturbs the adaptive systems. This difference is especially important in dynamic conversations with rapidly changing speakers or overlapping dialogue.

Figure 2. Comparison of support offered by an “own voice-blind” hearing aid and an “own voice-trained” hearing aid.
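The contrast in Figure 2 can be mimicked with a toy steering loop. In this sketch, `Frame`, `support_for` and `steer` are hypothetical names, the SNR-to-support mapping is invented, and the levels (partner speech at 68 dB, own voice at 80 dB, noise at 65 dB) are illustrative rather than measured. The "blind" variant reads the loud own voice as a high-SNR situation and withdraws support; the "trained" variant holds the setting chosen for the conversation partner while an own voice detector flags the frame.

```python
from dataclasses import dataclass

@dataclass
class Frame:
    speech_db: float   # level of the dominant talker at the microphones
    noise_db: float    # background noise level
    own_voice: bool    # output of an own voice detector for this frame

def support_for(snr_db):
    # Hypothetical mapping: more support at low SNR, clipped to [0, 1]
    return min(1.0, max(0.0, (10.0 - snr_db) / 15.0))

def steer(frames, own_voice_aware):
    """Return the support level chosen for each frame."""
    current = 0.0
    levels = []
    for f in frames:
        if own_voice_aware and f.own_voice:
            # Own voice detected: keep the setting chosen for the partner
            levels.append(current)
            continue
        current = support_for(f.speech_db - f.noise_db)
        levels.append(current)
    return levels

def count_switches(levels):
    return sum(a != b for a, b in zip(levels, levels[1:]))

partner = Frame(speech_db=68.0, noise_db=65.0, own_voice=False)
wearer = Frame(speech_db=80.0, noise_db=65.0, own_voice=True)
turns = [partner, wearer, partner, wearer, partner]

blind = steer(turns, own_voice_aware=False)    # toggles every turn
trained = steer(turns, own_voice_aware=True)   # constant support
```

Here `count_switches(blind)` is 4 and `count_switches(trained)` is 0: the own voice flag removes exactly the state changes that a turn-taking conversation would otherwise trigger.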

Although, from a high-level point of view, this update is just one detail within the entire AX Soundscape Processing, it is a detail that matters for speech understanding, sound quality, and localization in real-life conversations, and thus makes the AX support of the wearer even more powerful. For example, research by Schinkel-Bielefeld et al. (2018) indicates that switching from one type of directionality to another affects the ability to localize, and that listeners need some adaptation time to get used to such switches. By keeping the support stable while the wearer speaks, the improved AX Soundscape Processing addresses this problem.

References

Schinkel-Bielefeld N., Oreinos C. & Kamkar H.P. 2018. Improving localization in binaural beamforming for hearing aid wearers. Poster presented at the 10th Speech in Noise Workshop (SPIN), Glasgow, UK.

Signia 2022. Signia AX Own Voice Processing 2.0. Signia Backgrounder. Retrieved from www.signia-library.com/scientific_marketing/signia-ax-own-voice-processing-2-0.

Zwicker E. & Fastl H. 2013. Psychoacoustics: Facts and Models. Springer Science & Business Media.